Amit Arole

January 29, 2021 | 5 minutes read

Clustering, in data mining, is the task of grouping a set of objects so that objects in the same group are more similar to each other than to objects in other groups. Clustering is one of the most widely used techniques in data science, including machine learning, deep learning, statistical analysis, image processing, analytics and more. K-means is an unsupervised learning algorithm used to group unlabeled data, where k is the number of groups. It is a popular clustering technique that partitions the full set of observations into k clusters. Each cluster has a logical center called the centroid.

The solution below goes one step further: it identifies the items most similar to a given point within a cluster.

Here’s the entire process:

- Remove Outliers – Outliers are points that do not follow the normal behavior pattern of the data set. They need to be removed from the full data set to get more accurate results. Outliers are identified by their statistical deviation from the mean, assuming the data set is normally distributed. More details can be found here.

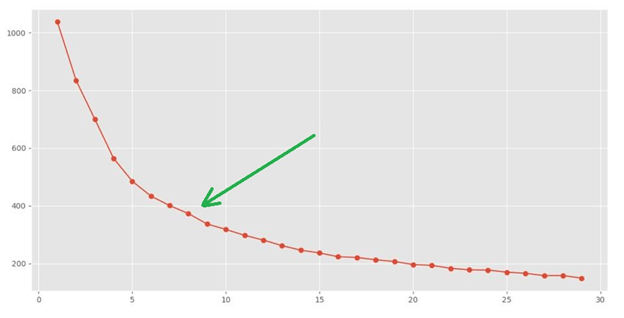

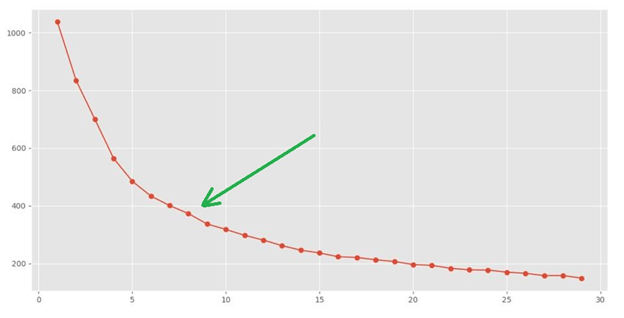

- Finding the right K in K-means – Of the techniques available for finding the right value of k, the Elbow method is one of the most widely adopted. It computes the sum of squared errors (SSE) for each candidate k. As k increases, the SSE decreases. At some point the improvement gained from each additional cluster drops off sharply, creating the elbow in the curve. The k at this elbow is a good choice for that data set. For example, in the figure below, the value of K can be read off as between 7 and 10.

- Compute the Clusters – We then run K-means clustering with the value of K chosen above. This groups similar data points into the same cluster. Each cluster has its own center, called the cluster centroid.

- Finding most similar items in a cluster – Finding the ‘most similar items’ to a chosen item in the data set is a very practical problem. To find items with features very similar to the chosen one, we calculate the Euclidean distance between the chosen item and every other item in the same cluster. The most similar items are those at the smallest distance from the chosen item.
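The four steps above can be sketched end to end in plain Python. This is a minimal illustration under stated assumptions, not the implementation behind this post: the function names, the 3-standard-deviation outlier threshold, the toy data and the deterministic farthest-point initialization are all choices made here for the example. In practice a library such as scikit-learn would normally handle the clustering step.

```python
import math
import statistics


def remove_outliers(points, z_max=3.0):
    """Drop points that lie more than z_max standard deviations from the
    mean on any feature (z_max=3.0 is an assumed threshold)."""
    columns = list(zip(*points))
    means = [statistics.fmean(c) for c in columns]
    stdevs = [statistics.pstdev(c) or 1.0 for c in columns]  # guard against zero spread
    return [p for p in points
            if all(abs(x - m) / s <= z_max for x, m, s in zip(p, means, stdevs))]


def kmeans(points, k, max_iter=100):
    """Basic K-means. Returns (centroids, labels, sse)."""
    # Assumption: deterministic farthest-point initialization, used here only
    # so the example is reproducible. Libraries usually seed randomly.
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(math.dist(p, c) for c in centroids)))
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point joins its nearest centroid.
        new_labels = [min(range(k), key=lambda j: math.dist(p, centroids[j])) for p in points]
        if new_labels == labels:
            break  # assignments are stable, so the algorithm has converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # an empty cluster keeps its previous centroid
                centroids[j] = tuple(statistics.fmean(c) for c in zip(*members))
    sse = sum(math.dist(p, centroids[lab]) ** 2 for p, lab in zip(points, labels))
    return centroids, labels, sse


def most_similar(points, labels, index, n=3):
    """Indices of the n points nearest to points[index] within its cluster."""
    same = [i for i, lab in enumerate(labels) if lab == labels[index] and i != index]
    return sorted(same, key=lambda i: math.dist(points[i], points[index]))[:n]


# Toy data (made up for illustration): three tight blobs plus one far-away point.
offsets = [(-1, -1), (-1, 1), (1, -1), (1, 1), (0, 0), (0.5, 0)]
blob_centers = [(0, 0), (10, 10), (20, 0)]
data = [(cx + dx, cy + dy) for cx, cy in blob_centers for dx, dy in offsets]
data.append((1000, 1000))

clean = remove_outliers(data)                                 # step 1
sse_by_k = {k: kmeans(clean, k)[2] for k in range(1, 6)}      # step 2: values for an elbow plot
centroids, labels, _ = kmeans(clean, 3)                       # step 3
similar = most_similar(clean, labels, index=4, n=2)           # step 4

for k, sse in sse_by_k.items():
    print(f"k={k}: SSE={sse:.2f}")
print("Most similar to", clean[4], "->", [clean[i] for i in similar])
```

Plotting `sse_by_k` against k gives the elbow curve described above. For this toy data the SSE falls steeply up to k = 3 and only slightly afterwards. The similarity search is restricted to the chosen item's own cluster, so it compares against far fewer points than a scan of the whole data set would.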